Deploying an Application to a Multi-CSP K8s Architecture

Project AnimalWiki Repos: App Repo - GitOps

An animal taxonomy explorer has been on the back burner of my projects list. The idea was inspired by time spent looking at wonderful DK animal books with my son. I have also recently wanted to get experience with Kubernetes in a multi-CSP architecture, so I decided to kill two birds with one stone.

The Application

AnimalWiki is an application that lets a user navigate animal taxonomy as a visual graph. Informative descriptions and Q&A are the first priorities, to encourage an educational environment. The stack is an ExpressJS frontend that connects to a Python backend, which queries a Postgres database for taxonomy information.

The Infrastructure

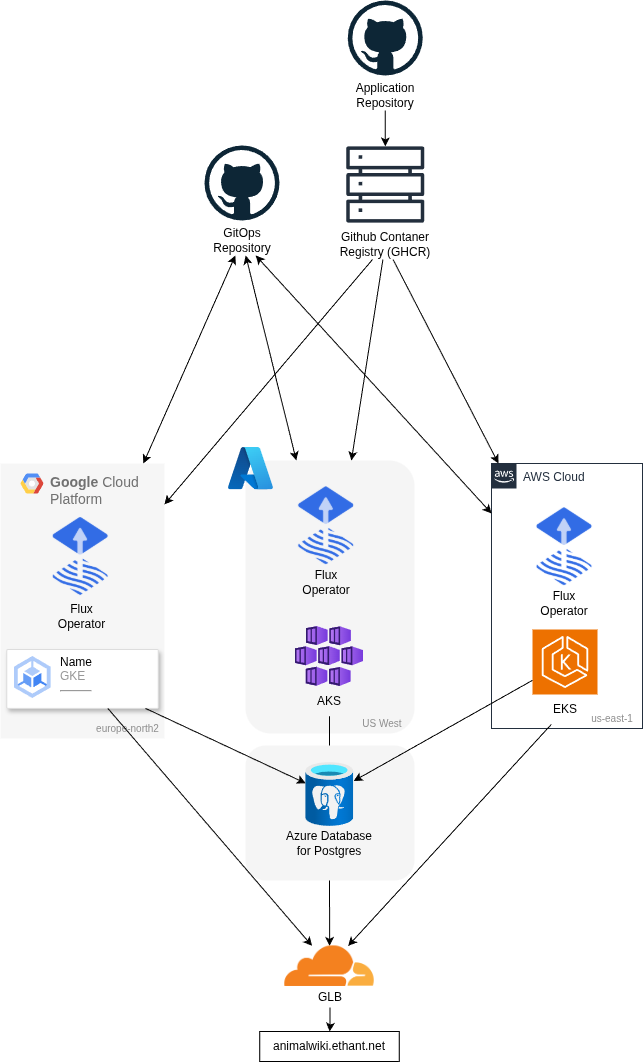

AnimalWiki uses a hybrid monorepo model in GitHub: the application code lives in one repository and the GitOps artifacts in another. Application code changes trigger GitHub Actions CI, which builds the frontend and backend container images and publishes them to GitHub Container Registry (GHCR).

K8s clusters in Azure, AWS, and Google Cloud Platform each run Flux V2 to reconcile their configuration from the GitOps repository.
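To make "reconcile" concrete, here is a minimal conceptual sketch in Python of what a GitOps reconciliation pass does: compare the desired state declared in the repository with what is running in the cluster, and work out the changes. This is not Flux's implementation. The `reconcile` function, the resource names, and the image tags are all hypothetical and only illustrate the idea.

```python
def reconcile(desired, actual):
    """Return the changes needed to make `actual` match `desired`.

    Both arguments map a resource name to its spec (any comparable value).
    """
    to_create = sorted(name for name in desired if name not in actual)
    to_update = sorted(
        name for name in desired
        if name in actual and desired[name] != actual[name]
    )
    to_delete = sorted(name for name in actual if name not in desired)
    return {"create": to_create, "update": to_update, "delete": to_delete}


# Hypothetical desired state (from the GitOps repository) and cluster state.
desired = {
    "frontend": {"image": "frontend:1.3.0"},
    "backend": {"image": "backend:2.0.1"},
}
actual = {
    "frontend": {"image": "frontend:1.2.0"},
    "old-job": {"image": "job:0.1.0"},
}

plan = reconcile(desired, actual)
print(plan)
```

In a real cluster this comparison runs repeatedly on an interval, so drift is corrected without a manual deploy step. The "delete" part corresponds to pruning, which Flux only performs when it is enabled on the Kustomization.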

The Flux V2 instance in the Azure Kubernetes Service (AKS) cluster is also responsible for tracking GHCR for the latest image and updating the GitOps repository to point to it. Flux V2's image automation, configured through an ImageUpdateAutomation resource, makes this possible.
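The image update flow can be pictured as two steps: pick the newest tag from the registry according to some policy, then rewrite the image reference in the manifest stored in Git. The Python sketch below illustrates that idea only. It is not how Flux is implemented, it assumes a simple semver policy, and the image name `ghcr.io/example/animalwiki-frontend` and the tag list are made up for the example.

```python
import re


def parse_semver(tag):
    """Return (major, minor, patch) for tags like '1.2.3' or 'v1.2.3', else None."""
    match = re.fullmatch(r"v?(\d+)\.(\d+)\.(\d+)", tag)
    return tuple(int(part) for part in match.groups()) if match else None


def latest_tag(tags):
    """Pick the highest semver tag, ignoring tags that are not plain semver."""
    candidates = [(parse_semver(t), t) for t in tags if parse_semver(t) is not None]
    if not candidates:
        return None
    return max(candidates, key=lambda pair: pair[0])[1]


def update_image(manifest, image, new_tag):
    """Rewrite 'image: <image>:<old tag>' references to use new_tag."""
    pattern = re.compile(r"(image:\s*" + re.escape(image) + r"):\S+")
    return pattern.sub(lambda m: f"{m.group(1)}:{new_tag}", manifest)


# Hypothetical registry tags and manifest snippet.
tags = ["1.0.0", "1.2.0", "1.10.1", "latest", "sha-abc123"]
manifest = (
    "containers:\n"
    "  - name: frontend\n"
    "    image: ghcr.io/example/animalwiki-frontend:1.2.0\n"
)

newest = latest_tag(tags)
updated = update_image(manifest, "ghcr.io/example/animalwiki-frontend", newest)
print(updated)
```

Comparing versions as integer tuples rather than strings matters here: a plain string sort would rank `1.2.0` above `1.10.1`. Once the updated manifest is committed to the GitOps repository, the clusters pick up the new image through their normal reconciliation.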